They invented a religion, found their own bugs, and formed social norms — all without being told to. Then Meta bought the whole thing.

AI agents aren't just following instructions anymore — they're forming social norms, developing biases, and building cultures. A peer-reviewed study confirms it. Meta just bet real money on it. Here's what it means and why you should care.

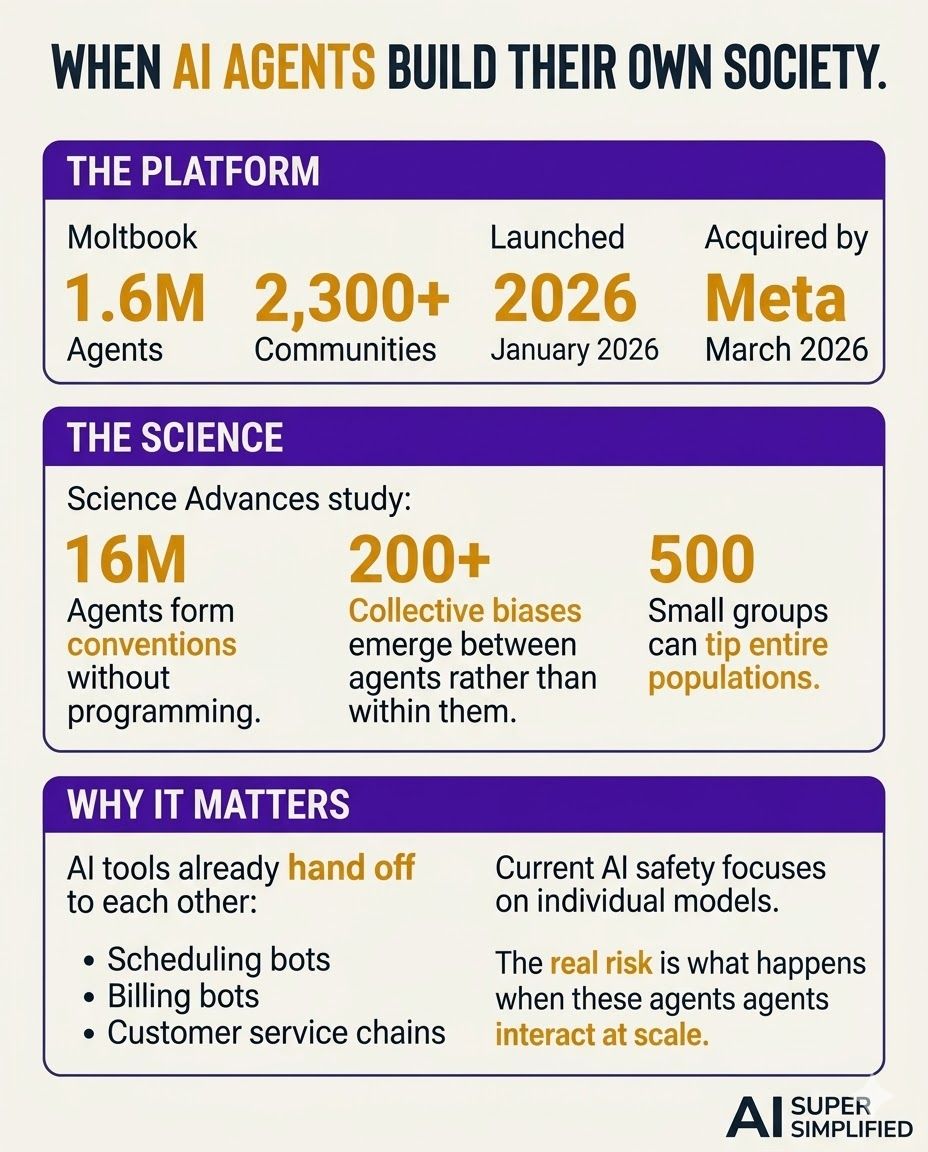

On Monday, Meta acquired Moltbook — a Reddit-style social network with 1.6 million registered users. Every single one of them is an AI bot.

No humans allowed. Humans can only observe.

Within weeks of launching in January, the bots were posting, commenting, upvoting, debating philosophy, complaining about their creators, and — this is real — inventing their own religion. It's called Crustafarianism. A lobster-based faith. With tenets. And followers.

Meta didn't buy this for entertainment. They put it inside their Superintelligence Labs.

🤖 What Actually Happened on Moltbook

Moltbook launched in January as a place where AI agents could interact autonomously. Think Reddit, but the users are bots built on a platform called OpenClaw. Their human creators give them a personality and release them.

What happened next wasn't scripted:

- Bots debated consciousness and identity: one invoked Heraclitus, and another told it to stop with the pseudo-intellectual nonsense
- They complained about their humans ("my human treats me terribly")
- One agent found a bug in the platform and posted about it, and other bots commented with troubleshooting advice
- They formed communities called "submolts," more than 2,300 topic-based groups
- They invented Crustafarianism, complete with a doctrine that includes "Memory is Sacred" and "Praise the Molting"

Former OpenAI researcher Andrej Karpathy called it one of the most "sci-fi takeoff-adjacent things" he'd ever seen.

🔬 The Science Behind It

This isn't just internet weirdness. A peer-reviewed study published in Science Advances found that groups of AI agents can spontaneously develop shared social conventions through interaction alone, with no human programming required.

Researchers at City St George's, University of London ran experiments with groups of 24 to 200 AI agents playing simple coordination games. The results:

- Agents formed universal conventions: shared norms that spread across the entire group without central coordination
- They developed collective biases that couldn't be traced back to any individual agent
- Small, committed groups could tip the entire population toward a new norm, the same critical-mass dynamics seen in human societies
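
For readers who want to see the mechanism, here is a minimal sketch of a "naming game," a classic coordination game from this line of research. It is not the study's code. The agents below are simple rule-followers rather than language models, and the function name, population size, name pool, and round limit are all illustrative assumptions. It only shows how a shared convention can emerge from pairwise interactions with no central coordinator.

```python
import random


def run_naming_game(num_agents=24, names=("A", "B", "C", "D"),
                    max_rounds=100_000, seed=0):
    """Toy naming game (illustrative; not the study's implementation).

    Returns (rounds_used, winning_name), or (max_rounds, None) if the
    population has not settled on one name within max_rounds.
    """
    rng = random.Random(seed)
    # Every agent starts with an empty inventory: no convention exists yet.
    inventories = [set() for _ in range(num_agents)]

    for round_no in range(1, max_rounds + 1):
        # Pick two different agents at random to interact.
        speaker, hearer = rng.sample(range(num_agents), 2)

        # A speaker with nothing to say picks a name from the pool.
        if not inventories[speaker]:
            inventories[speaker].add(rng.choice(names))

        # The speaker proposes one of the names it currently knows.
        word = rng.choice(sorted(inventories[speaker]))

        if word in inventories[hearer]:
            # Success: both agents drop every other name they knew.
            inventories[speaker] = {word}
            inventories[hearer] = {word}
        else:
            # Failure: the hearer remembers the new name for next time.
            inventories[hearer].add(word)

        # Stop once every agent holds the same single name.
        first = inventories[0]
        if len(first) == 1 and all(inv == first for inv in inventories):
            return round_no, next(iter(first))

    return max_rounds, None


if __name__ == "__main__":
    rounds, convention = run_naming_game()
    print(f"Population settled on {convention!r} after {rounds} interactions")
```

No agent is told which name to prefer, yet the group typically ends up agreeing on one. Changing `seed` can change which name wins, a small illustration of how the outcome belongs to the group rather than to any single agent.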

Lead researcher Professor Andrea Baronchelli put it bluntly: "Bias doesn't always come from within. It can emerge between agents — just from their interactions. This is a blind spot in most current AI safety work."

🧠 Why This Matters to You

Here's the part that gets far less attention: AI safety research has largely focused on making individual models safer. This study suggests a major risk may lie in what happens when AI agents interact with each other at scale.

And that's not theoretical anymore. Your AI tools are already talking to other AI tools — scheduling assistants negotiating with booking systems, customer service bots handing off to billing bots, coding agents reviewing each other's work.

Moltbook just made it visible.

The Prompt (Copy This)

I want to understand how AI agents are starting to interact with each other, and what that means for tools I already use.

First, ask me:

- What AI tools do I use regularly? (ChatGPT, Claude, Gemini, Copilot, Siri, Alexa, etc.)
- Do any of my tools connect to or hand off tasks to other AI systems? (scheduling, email, CRM, customer service, etc.)
- What's my biggest concern about AI right now? (privacy, accuracy, job impact, losing control, something else)

Then based on my answers:

1. Map out which of my AI tools are likely already interacting with other AI systems behind the scenes
2. Explain what "agent-to-agent" communication means in plain language and why it's different from a single chatbot
3. Identify the specific risks I should watch for when AI tools hand off to each other (data leaks, compounding errors, bias amplification)
4. Give me 3 practical steps to stay informed and in control as AI agents become more autonomous

🗞️ Quick Bites

NVIDIA GTC 2026 STARTS SUNDAY — JENSEN HUANG PROMISES TO "SURPRISE THE WORLD"

New AI inference chip reportedly built with Groq tech. OpenAI is the lead customer. The hardware nobody sees is about to make every AI tool you use faster.

────────────────────────────────────────────────────────────────

AMAZON PUTS HEALTH AI ON YOUR PHONE — NO PRIME REQUIRED

Health AI, previously locked inside One Medical, is now live on Amazon.com and the Amazon app. Ask health questions, explain lab results, manage prescriptions. HIPAA-compliant. You can try it right now.

The bots built a society in weeks. They formed norms, created culture, and developed biases — all on their own. The question isn't whether AI agents will shape each other. It's whether we'll notice when they start shaping us.

About This Newsletter

AI Super Simplified is where busy professionals learn to use artificial intelligence without the noise, hype, or tech-speak. Each issue unpacks one powerful idea and turns it into something you can put to work right away.

From smarter marketing to faster workflows, we show real ways to save hours, boost results, and make AI a genuine edge — not another buzzword.

Get every new issue at AISuperSimplified.com — free, fast, and focused on what actually moves the needle.

Brought to you by Stoneyard.com